Figure 1: an AMMR needs to identify check and carefully harvest the fruit without damaging it

From logistics to manufacturing and from healthcare to smart buildings applications, machines are expected not just to follow instructions, but to adapt, perceive and make decisions in highly dynamic environments.

A strong example of this physical AI is the rise of mobile manipulators, also known as autonomous mobile manipulator robots (AMMRs). They combine the mobility of autonomous platforms with the dexterity of robotic arms and they are beginning to be adopted for applications such as warehousing, logistics and agriculture, where they can perform a range of tasks and adapt to these dynamic environments. They can be used for everything from simple pick-and-place tasks to more complex operations such as the identification and harvesting of crops on a farm.

In 2023 the AMMR market was worth $385.9m, according to Grand View Research, with approximately 85% split across assembly (~30%), inventory management (~25%), transportation (~20%) and sorting (~10%).

Mobile manipulators navigate environments and interact with objects, enabling the automation of repetitive or hazardous work. They are also being looked to for more complex tasks, including elderly care support. This sector is set to grow at a CAGR of 23.9%, giving the market a forecasted value of $1.7bn.

Sensors

As with all autonomous systems, mobile manipulators require a combination of perception, planning and action. This requires data from a broad suite of sensors being fed to a processor capable of running advanced AI models directly on the device.

Vision cameras are essential. Depending on the tasks and environment, they can be either mounted on the robot or positioned externally to the robot – in close proximity or to stream data to the robot from a farther location.

Additional sensors are often integrated into AMMR systems to augment camera perception data. For example, depth sensors, including lidar and time-of-flight cameras, plus short-range ultrasound sensors, help with distance estimation and obstacle detection, while microphones allow audio prompts to be used.

Those multi-modal inputs are then fused into a coherent understanding of the environment. That comprehensive input then guides an AMMR to act accordingly.

Vision language action models

For interpretation of these data, an intelligent language model is often required. A growing trend in AMMRs is the use of vision language action (VLA) models. VLAs go beyond object recognition or navigation by combining perception, language and motor control in a single framework. These take the spoken (or written) prompt, then initiate the chain of reasoning to allow the AMMR system to interpret the commands and action them.

Examples of VLAs are coming from both research and open-source communities, such as the RT-2 from Google.

These systems often require large-scale pre-training on diverse datasets, combining natural images, video and textual descriptions. For example, RT-2 trained its VLA models by combining vision-language model (VLM) pre-training with robotic data. That robotic data consisted of a set of 6k robotic evaluation trials in a variety of conditions, for which they primarily used a 7DoF mobile manipulator robot. The conclusion was that this approach led to not only very performant robotic policies, but also a significantly better generalisation performance and emergent capabilities inherited from web-scale vision-language pre-training.

Once trained, the inference stage can be deployed on-device, but it demands significant processing power. VLA models are computationally intensive because they integrate vision, language and action, often using large transformer-based models, which require temporal and spatial reasoning, and produce structured, multi-step outputs. This makes efficiency critical: without tailored hardware, the power and memory requirements would be prohibitive for a mobile platform.

For instance, to process a simple prompt such as “bring a cup of water,” the system must recognise speech input, convert it into text, interpret the task, locate the cup in the environment using object detection, navigate around obstacles and co-ordinate the arm to grasp and transport the object. The computing platform must manage multi-modal sensor fusion, large model inference and real-time control concurrently, hence the importance of efficient AI acceleration at the edge.

Processing

A typical approach to providing this processing capability is to use a general-purpose embedded processor. Developers accustomed to the tools often default to using them for the entire development flow. But while training large models still requires general-purpose and large processors, running inference at the edge does not, and there are more efficient methods of tackling the latter.

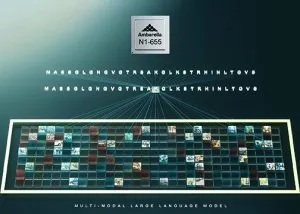

Figure 2: dedicated SoCs improve thermal management. The Ambarella N1-655 SoC integrates a CVflow AI accelerator alongside its dedicated image processing pipeline, enabling it to process LLMs and VLMs with up to 8bn parameters while decoding 12 streams of 1080p30 video with low power consumption.

General-purpose processors are designed to cover a wide range of applications with significant raw processing power, but this generality can create inefficiencies in edge AI deployments. High power draw leads to shorter battery life, and the need for active cooling adds bulk and mechanical complexity. Bottlenecks arise because general-purpose processors are often not optimised for running perception and AI inference in parallel, meaning that resource contention between tasks can reduce performance. Frequent calls to external DRAM also increase latency and power consumption.

A better approach is to use a purpose-built SoC with integrated AI accelerators, perception engines and CPUs for general tasks. They are specifically built for edge AI applications, following an ‘algorithm-first’ approach in which the hardware is designed to support the latest AI workloads most relevant to the target use case. The use of tailored SoCs will deliver higher efficiency and reliability.

Dedicated SoCs will have been designed to ensure that different workloads are handled by specialised engines in parallel. This means vision processing can be offloaded to an imaging pipeline, inference workloads to an integrated AI accelerator and navigation or control logic to the CPU. This separation reduces contention and avoids the bottlenecks common in general-purpose processors, where multiple tasks compete for the same resources.

Another key architectural choice is in minimising calls to external DRAM. VLAs are typically large, requiring the use of off-chip memory. Accessing that external DRAM consumes significant power and adds latency. This is especially true when large models such as VLAs are running alongside real-time perception. To minimise these issues, purpose-built SoCs incorporate high-bandwidth on-chip SRAM and memory hierarchies that are optimised for reusing intermediate data. This improves efficiency per Watt and allows sustained performance without thermal throttling.

General-purpose processors typically require active cooling and larger batteries. This not only increases the robot’s size (and decreases its manoeuvrability), but also creates noise and brings in potential points of failure with fan wear and tear. Efficient dedicated SoCs can often rely on passive cooling and smaller batteries, improving thermal management and bill of materials.

Mobile manipulators illustrate the broader shift in automation towards context-aware, flexible and intelligent systems. Combining mobility with manipulation expands the range of tasks that robots can perform, yet the ability to deliver these capabilities depends on more than model design.

The efficiency of memory hierarchies, the balance of power across locomotion, manipulation and compute, and the practicality of thermal management all determine whether a system will succeed outside controlled environments.

There is also an ecosystem challenge. Most AI development remains general-purpose processor-centric because training is anchored in datacentres. Moving to inference-optimised SoCs for physical AI at the edge requires changes to toolchains and habits as much as hardware, but the benefits are more than worth it. How the industry addresses these challenges will help determine the rate at which AMMRs transition from promising prototypes to widely deployed systems.

Electronics Weekly

Electronics Weekly