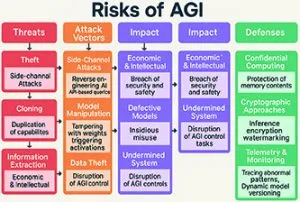

Figure 1: a structured view of AGI risks across threats, attack vectors, likely impacts and defensive controls

In the race to develop artificial general intelligence (AGI), most of the focus has been on scaling: building larger models, utilising more data and deploying bigger clusters. As capabilities grow, another critical question arises: what if someone steals the AGI model? Unlike traditional software breaches or isolated data leaks, revealing an AGI system’s weights, architecture or even its intermediate activations during inference can have irreversible consequences. Whether an attacker is cloning the intelligence, reprogramming it for malicious purposes or extracting sensitive information from training data, the security risks surrounding AGI are real and fundamentally different from traditional cybersecurity threats.

Imagine investing billions of dollars and years of research to develop a general-purpose reasoning machine, only to have a well-funded adversary replicate it within hours or days.

A growing body of research shows that models can be stolen through side-channel attacks, reverse engineering and application programming interface (API)-driven extraction. By carefully probing inputs and analysing outputs, attackers can deduce model behaviour and gradually rebuild capabilities. With AGI the stakes rise sharply because intelligence itself becomes a scalable weapon.

Vulnerabilities

The main problem is that AGI systems are more than just software. The model weights learnt from large datasets encode skills. If those weights are copied, an attacker could access the model’s reasoning ability while bypassing safety layers, policy constraints or governance controls set by the original operator. Internal activations, the intermediate representations created during a model’s ‘thinking’ process, can also reveal sensitive patterns: reasoning traces, hidden decision logic or even memorised parts of training data. In this way activations can serve as an information-rich side channel, which could be exploited to reverse-engineer how decisions are made or, worse, to subtly manipulate outcomes.

Protecting AGI is complex and layered for three main reasons. First, it involves scale, as AGI-class systems can have hundreds of billions or trillions of parameters and any protection must operate without reducing performance or usability.

Second, AGI functions across a broad and growing range of platforms – from cloud GPUs to edge devices in vehicles, robots or embedded systems – introducing multiple trust boundaries and operational environments. A model can remain secure on a protected server, but can become vulnerable once distributed to an endpoint outside full control.

Third, inference itself is a significant challenge. Even if weights are encrypted they often need to be decrypted and loaded into memory to run, creating a window where attackers can observe, dump or probe sensitive information.

Securing AGI

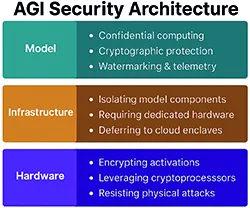

One promising area is confidential computing, where models operate within hardware-backed secure enclaves. The aim is to safeguard model weights and sensitive computations even from privileged system software, including root-level attackers or malicious administrators. Theoretically this enables AGI models to run in cloud environments or even on devices without revealing raw weights. In practice, current enclave implementations still face significant challenges, namely limited secure memory, susceptibility to certain side-channel attacks and noticeable performance overhead. Consequently, confidential computing is often most effective for critical components (such as key layers, safety modules or high-value submodels) rather than for full AGI-scale deployments, at least with existing hardware.

Figure 2: AGI security controls map to three practical domains: model-level protections, infrastructure isolation and hardware-rooted trust

A second approach is cryptographic inference, which includes techniques such as homomorphic encryption and other privacy-preserving computation methods. The long-term goal is ambitious: to ensure that the model or data is never exposed in plaintext during operation. Fully homomorphic workflows are still computationally intensive and often impractical for real-time or large-scale inference. A more practical solution is hybridisation: applying strong cryptographic protections to the most sensitive steps (such as specific layers, routing functions or memory reads), combining them with encrypted caching and secure key management, and accepting controlled trade-offs between latency and exposure.
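To make the idea of computing on ciphertexts concrete, the sketch below uses the Paillier cryptosystem, which is only additively homomorphic and is therefore a much simpler relative of the fully homomorphic schemes mentioned above. It evaluates one linear step (a dot product with plaintext integer weights) on encrypted inputs. The primes, function names and the `encrypted_dot` helper are illustrative assumptions, and the key size is far too small to be secure.

```python
import math
import random

# Toy key material (assumption for illustration): real keys use primes of 1024+ bits.
P, Q = 1789, 1861
N = P * Q
N2 = N * N
G = N + 1
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P-1, Q-1)


def _L(u: int) -> int:
    """Paillier's L function: L(u) = (u - 1) / N."""
    return (u - 1) // N


MU = pow(_L(pow(G, LAM, N2)), -1, N)  # modular inverse, Python 3.8+


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N with fresh randomness."""
    while True:
        r = random.randrange(1, N)
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N2) * pow(r, N, N2)) % N2


def decrypt(c: int) -> int:
    """Recover the plaintext from a ciphertext using the private values LAM and MU."""
    return (_L(pow(c, LAM, N2)) * MU) % N


def encrypted_dot(enc_inputs, weights):
    """Return Enc(sum(w_i * x_i)) given Enc(x_i) and non-negative integer weights.

    Multiplying ciphertexts adds plaintexts; raising a ciphertext to the
    power w multiplies its plaintext by w. No input is ever decrypted here.
    """
    acc = encrypt(0)
    for c, w in zip(enc_inputs, weights):
        acc = (acc * pow(c, w, N2)) % N2
    return acc
```

In this sketch the party running `encrypted_dot` never sees the inputs; only the key holder can call `decrypt` on the result. Non-linear operations are not possible with Paillier alone, which is one reason hybrid designs confine cryptographic protection to selected steps.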

A third approach focuses on watermarking and fingerprinting, that is, embedding durable, hard-to-remove signatures into model weights or behaviours. These techniques rarely prevent theft, but they can provide proof of ownership, detect misuse and trace stolen copies. Traceability can act as a valuable deterrent and enforcement tool.
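One way to picture behavioural fingerprinting is as a set of secret trigger prompts with known responses, against which a suspect model can be checked. The following is a minimal sketch under that assumption. The key-derived triggers, the function names and the idea of treating a model as a plain callable are all hypothetical, and the step of actually training the responses into a model is outside its scope.

```python
import hashlib
import hmac


def make_triggers(secret_key: bytes, count: int) -> list:
    """Derive reproducible, hard-to-guess trigger prompts from a secret key."""
    return [
        hmac.new(secret_key, f"trigger-{i}".encode(), hashlib.sha256).hexdigest()[:16]
        for i in range(count)
    ]


def expected_response(secret_key: bytes, trigger: str) -> str:
    """The response a fingerprinted model is assumed to give for a trigger."""
    tag = hmac.new(secret_key, b"resp:" + trigger.encode(), hashlib.sha256)
    return tag.hexdigest()[:8]


def fingerprint_match_rate(model, secret_key: bytes, count: int = 20) -> float:
    """Fraction of secret triggers for which `model` returns the expected response."""
    triggers = make_triggers(secret_key, count)
    hits = sum(model(t) == expected_response(secret_key, t) for t in triggers)
    return hits / count


# Stand-in "models" for illustration: a copy that carries the fingerprint
# and an unrelated model that does not.
KEY = b"owner-secret"
_table = {t: expected_response(KEY, t) for t in make_triggers(KEY, 20)}


def stolen_copy(prompt: str) -> str:
    return _table.get(prompt, "generic answer")


def unrelated_model(prompt: str) -> str:
    return "generic answer"
```

A match rate near 1.0 from `fingerprint_match_rate(stolen_copy, KEY)` would support an ownership claim, while an unrelated model should score near zero. A real scheme would also need the fingerprint to survive fine-tuning, which this sketch does not address.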

Setting boundaries

Another practical approach gaining popularity is model splitting (or partitioning). The idea is to keep the most sensitive parts of the system within a trusted boundary, such as secure hardware, protected enclaves or controlled server-side execution, while exposing only less-sensitive functions externally. This reduces the impact of any single breach and limits the attacker’s ability to replicate the entire system. The AGI becomes a distributed system where core intelligence is protected and peripheral components are designed to be replaceable or present a low risk if leaked.

Defences are not purely technical; they also require operational oversight. If a model is offered via an API or service, telemetry and behaviour analysis can detect suspicious interaction patterns. Overly broad or systematic queries, unusual access times, repeated boundary-probing or abnormal entropy in prompts can indicate extraction attempts. Once detected, systems can trigger alerts, enforce rate limits, restrict higher-risk capabilities or dynamically direct requests to more secure model versions. These measures do not eliminate the threat, but they can significantly raise the cost of model theft and reduce potential impact.
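Two of the signals mentioned above, query volume and prompt entropy, can be sketched as a sliding-window monitor. This is an illustration only: the class name, the thresholds and the choice of character-level Shannon entropy are assumptions, and a production system would combine many more signals.

```python
import math
from collections import Counter, deque


def shannon_entropy(text: str) -> float:
    """Character-level Shannon entropy of a prompt, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in Counter(text).values())


class ExtractionMonitor:
    """Flags clients whose recent queries look like systematic probing."""

    def __init__(self, window_seconds=60.0, max_queries=30, entropy_threshold=4.5):
        self.window_seconds = window_seconds
        self.max_queries = max_queries
        self.entropy_threshold = entropy_threshold
        self._history = {}  # client_id -> deque of (timestamp, prompt entropy)

    def observe(self, client_id: str, prompt: str, now: float) -> list:
        """Record one query and return the reasons, if any, that it looks suspicious."""
        history = self._history.setdefault(client_id, deque())
        history.append((now, shannon_entropy(prompt)))
        # Drop queries that have fallen out of the sliding window.
        while history and now - history[0][0] > self.window_seconds:
            history.popleft()

        reasons = []
        if len(history) > self.max_queries:
            reasons.append("rate")
        mean_entropy = sum(e for _, e in history) / len(history)
        if mean_entropy > self.entropy_threshold:
            reasons.append("entropy")
        return reasons
```

A caller would pass each request through `observe` and, on a non-empty result, apply one of the responses described above, such as rate limiting or routing to a more restricted model version.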

Security must be maintained during training, not just during deployment. AGI systems learn from their training environment, meaning that data, subtle manipulations or intentionally crafted artefacts can create back doors that trigger under specific conditions. Protecting the training process involves dataset integrity controls, provenance tracking, continuous monitoring of training dynamics and adversarial testing throughout model development. Identifying vulnerabilities early, before weights are deployed, can often make the difference between manageable risks and systemic exposure.
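Dataset integrity controls can start with something as simple as a hash manifest recorded when the data is approved and checked again before each training run. The sketch below assumes records are held in memory as bytes keyed by name; the function names and the report layout are hypothetical.

```python
import hashlib


def build_manifest(records: dict) -> dict:
    """Map each record name to the SHA-256 digest of its bytes."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in records.items()}


def verify_manifest(records: dict, manifest: dict) -> dict:
    """Compare the current records against a trusted manifest.

    Returns the names of records that were modified, are missing,
    or were added since the manifest was built.
    """
    current = build_manifest(records)
    return {
        "modified": sorted(
            n for n in manifest if n in current and current[n] != manifest[n]
        ),
        "missing": sorted(n for n in manifest if n not in current),
        "unexpected": sorted(n for n in current if n not in manifest),
    }
```

Any non-empty entry in the report is a reason to stop and investigate before training. A manifest of this kind detects tampering after approval, but it cannot tell whether the approved data was already poisoned, which is why the other controls listed above still matter.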

Adaptive security

An emerging idea is adaptive security, where the AGI system actively defends itself. As systems become more autonomous they might detect unusual internal states, identify corrupted weights, recognise systematic probing (including side-channel patterns) and respond by tightening policies, changing execution paths or increasing protections. This adds a dynamic layer of resilience – an internal ‘security meta-awareness’ that helps prevent exploitation.

Strong legal frameworks, industry standards and credible auditing mechanisms will be essential. Standards and governance approaches such as the National Institute of Standards and Technology AI Risk Management Framework and regulatory regimes such as the EU AI Act provide a starting point, but enforcement will be difficult unless organisations develop security tools that ensure explainability, accountability and traceability from the beginning.

The threat is not just about losing intellectual property, but also about the danger of releasing powerful capabilities to untrusted individuals. Unlike typical data breaches, which can sometimes be fixed by password resets, key rotations or account controls, a leaked AGI model cannot be simply ‘reset’. It can be copied, modified, fine-tuned, combined with other systems and widely redeployed in ways the original developers never intended.

Security must be a fundamental design principle, not an afterthought, integrated into every stage from training and deployment to runtime, monitoring and governance. If the goal of AGI is to serve humanity, the main focus should be on safeguarding it from misuse, manipulation and malicious capture.

What is AGI?

Artificial general intelligence is a hypothetical technology that would allow a machine to learn, reason and solve problems like a human, without task-specific training. (Today’s ‘narrow AI’ learns by using algorithms or pre-programmed rules to guide actions and learn what to do for specific tasks.)

The goal for AGI is to develop self-awareness and perform tasks that it has not been trained to do, allowing it to adapt to surroundings and developing situations.

Today AGI is theoretical, but author Isaac Asimov foresaw a self-aware, conscious intelligence in robots in his 1942 short story Runaround. In this story he introduced his Three Laws of Robotics:

1 A robot cannot harm a human or, through inaction, allow harm to come to a human.

2 A robot must obey human orders, unless this conflicts with the first law.

3 A robot must protect itself, unless this conflicts with the first or second law.

In theory AGI can complete new tasks without training and perform creative actions.

Electronics Weekly
