FuriosaAI has received the first delivery of 4,000 units of its RNGD inference accelerator from TSMC and ASUS. The device is available either as a standalone PCIe card or as a turnkey server.

“With RNGD now shipping, we are giving enterprises the power to run the most advanced LLMs and agentic AI at scale, without the massive energy and infrastructure penalties of legacy solutions,” says FuriosaAI CEO June Paik. “The industry needs a high-performance alternative that works in the racks you have today.”

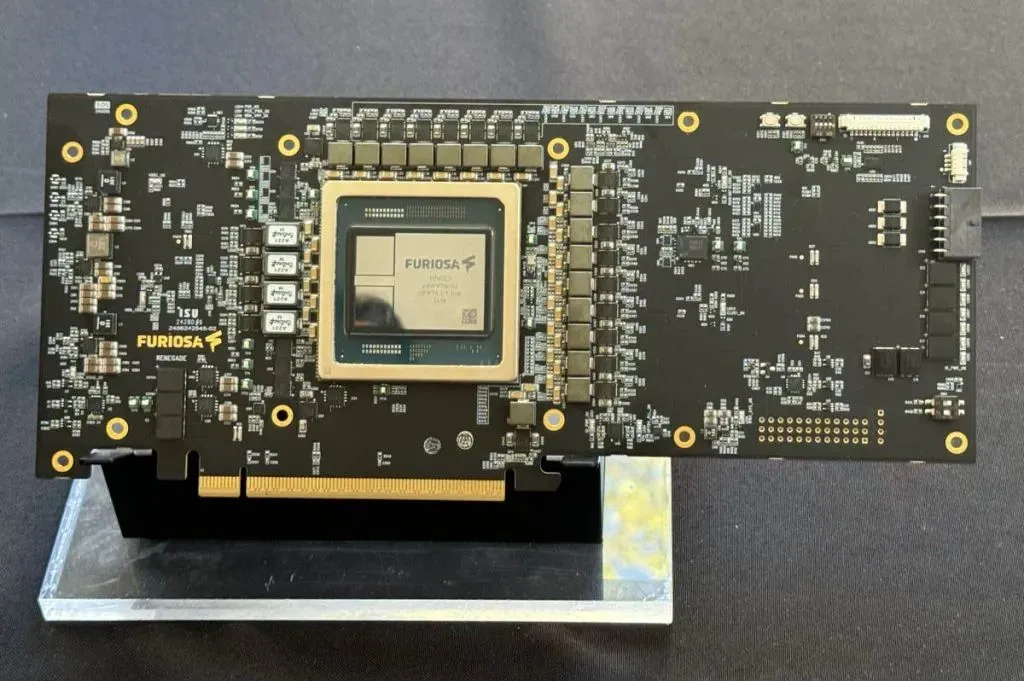

RNGD is a datacentre inference accelerator for advanced language models and for generative and agentic AI. It combines high compute throughput (512 INT8 TFLOPS) with energy efficiency (a 180W TDP per card).

LG AI Research has adopted RNGD to run its EXAONE models, and FuriosaAI held a public demonstration of gpt-oss models in collaboration with OpenAI in the second half of last year.

FuriosaAI claims that RNGD delivers 3.5x greater compute density (throughput per rack) than H100-based systems in standard environments. RNGD is now available in two form factors:

RNGD PCIe Card: A drop-in accelerator delivering frontier model performance at a strict 180W TDP.

NXT RNGD Server: A plug-and-play, 4U rackmount server housing 8 RNGD cards. Because the system draws 3kW, you can stack five NXT RNGD Servers in a single standard air-cooled rack, delivering 20 petaFLOPS (INT8) per rack.
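The per-rack figure follows from the numbers quoted above. As a quick check, this minimal sketch multiplies them out; the constant and function names are illustrative, and only the four input values come from the article.

```python
# Figures quoted in the article.
CARD_INT8_TFLOPS = 512   # per RNGD card
CARDS_PER_SERVER = 8     # per NXT RNGD Server
SERVER_POWER_KW = 3      # per NXT RNGD Server
SERVERS_PER_RACK = 5     # per standard air-cooled rack


def rack_totals():
    """Return (INT8 petaFLOPS per rack, power draw per rack in kW)."""
    server_tflops = CARD_INT8_TFLOPS * CARDS_PER_SERVER
    rack_pflops = server_tflops * SERVERS_PER_RACK / 1000
    rack_power_kw = SERVER_POWER_KW * SERVERS_PER_RACK
    return rack_pflops, rack_power_kw


pflops, power_kw = rack_totals()
print(f"{pflops} INT8 petaFLOPS per rack at {power_kw} kW")
```

Five servers of eight cards each come to 20.48 petaFLOPS, which the article rounds to 20, at a total draw of 15kW per rack.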

RNGD is supported by an SDK which provides advanced optimisation techniques, such as inter-chip tensor parallelism, and support for popular models such as Qwen 2 and Qwen 2.5.

The FuriosaAI SDK offers torch.compile support, a drop-in replacement for vLLM, and OpenAI API compatibility, enabling developers to move quickly with minimal changes to their existing code.
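In practice, OpenAI API compatibility means that a client written against the OpenAI chat completions format should need little more than a different base URL. The sketch below illustrates this using only the Python standard library. The server address and model name are hypothetical placeholders, not values from FuriosaAI's documentation, and it assumes an OpenAI-compatible server is already running.

```python
import json
import urllib.request

# Both values are assumptions for illustration only.
BASE_URL = "http://localhost:8000/v1"  # hypothetical local server address
MODEL = "example-model"                # hypothetical model identifier


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build a POST request in the OpenAI chat completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send the request and return the first reply's text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Usage, once a compatible server is listening at BASE_URL:
#   print(send(build_chat_request("Summarise this article.")))
```

Because the request shape is the standard one, existing code that already targets an OpenAI-style endpoint would change only its base URL and model name under these assumptions.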

Precompiled artifacts on the Hugging Face Hub now support context lengths up to 32K tokens, enabling more complex and context-aware applications.

FuriosaAI has also optimised OpenAI’s 120B-parameter gpt-oss model to run on two RNGD cards.

Electronics Weekly
