Distributors can play a key role in helping engineering teams discover and evaluate new solutions, from concept to production, says Alex Iuorio. The technology behind edge AI is not a simple plug-in upgrade. The applications that use edge AI (and there are few that can’t) are using it in their own way. Distributors understand these disruptions and the key applications ...

AI

The support or use of AI (artificial intelligence) in electronics, including ML (machine learning), whether in software (supervised, unsupervised or reinforcement learning tools) or hardware (accelerators, GPUs, etc).

The AI native’s approach to edge AI in the IoT

Edge AI is a pivotal force in embedded computing systems, writes Nebu Philips, as he considers the elements required for comprehensive AI computing for the IoT. The need and opportunity for edge AI in the IoT is clear and significant, but there are challenges. To date, the industry is characterised by a complex and heterogeneous solutions landscape, repurposed silicon and ...

Record Q3 for Nvidia

Nvidia’s Q3 revenue rose 22% q-o-q and 62% y-o-y to $57bn, with a 73% margin. Q3 profit was $31.9bn, up 65% y-o-y. Some 61% of the Q3 revenue came from four customers, three of which are thought to be Microsoft, Meta and Oracle. “Blackwell sales are off the charts, and cloud GPUs are sold out,” said CEO Jensen ...

Imec tool revolutionises datacentre design

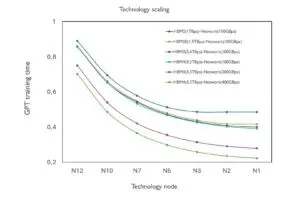

Imec has launched imec.kelis, an analytical performance modelling tool designed to revolutionise the design and optimisation of AI datacentres. Early adopters are already experimenting with the tool. The AI datacentre landscape is undergoing rapid transformation. As workloads scale to trillions of parameters and energy demands surge, system architects face mounting pressure to balance performance with sustainability and cost. Traditional simulation ...

PIC 1,000-times faster than Nvidia GPUs

A photonic IC said to perform AI tasks 1,000 times faster than Nvidia’s GPUs has been made by China’s Chip Hub for Integrated Photonics Xplore (CHIPX), reports the South China Morning Post. CHIPX, based in Wuxi, is affiliated with Shanghai Jiao Tong University and Turing Quantum, a Shanghai startup. The fab has installed a thin-film lithium niobate (TFLN) pilot line ...

Blue Skies For Clouds

The AI boom is good news for cloud service providers, with Amazon’s $38 billion partnership with OpenAI helping it stay ahead of the pack. The partnership enables OpenAI to run its AI workloads on AWS’s cloud infrastructure, giving the ChatGPT parent access to hundreds of thousands of Nvidia GPUs. In Q3 AWS had a 29% market share in the ...

SoftBank dumps Nvidia

SoftBank has sold its entire stake in Nvidia – 32 million shares – for $5.83 billion. At a news conference in Tokyo earlier today, SoftBank CFO Yoshimitsu Goto (pictured) said that the sale was part of its “divesting and reinvesting” strategy. “Our investment in OpenAI is significant, so we plan to use some existing assets to help fund it,” said ...

AI boosts telecom EBITDA

Telecoms companies are deploying AI across network operations, customer service, and fraud prevention to drive efficiency and reduce costs, according to IDC. These initiatives are already contributing to EBITDA margin gains, with predictive maintenance and automated support systems leading the way. AI also enables personalised offerings and dynamic pricing, boosting ARPU and reducing churn. Fraud detection systems enhanced by AI ...

Open Cosmos completes MANTIS mission

Open Cosmos, the company using space data to solve global challenges, has completed its MANTIS mission – a two-year programme that delivered Earth Observation data. Launched on 11 November 2023 aboard SpaceX’s Transporter-9, MANTIS was the first ESA InCubed satellite, backed by the UK Space Agency, and carried Satlantis’s iSIM90 high-resolution camera and a reconfigurable AI processor from Ubotica. Over its ...

JEDEC standard targets LPDRAM modules for data centre AI applications

JEDEC is nearing completion of a standard for low-profile LPDRAM modules developed specifically for data centre AI applications, it reports. It is the JESD328: LPDDR5/5X Small Outline Compression Attached Memory Module (SOCAMM2) Common Standard. The association says JESD328 is designed to provide a memory platform to deliver modular, low-power, high-bandwidth memory for AI CPU servers. Specifically, the new standard will ...

Electronics Weekly
