The technology behind edge AI is not a simple plug-in upgrade. The applications that use edge AI (and there are few that can’t) are each using it in their own way. Distributors understand these disruptions and the key applications they affect, which makes them a valuable partner in helping engineers navigate these changes.

Figure 1 shows the adoption rates for embedded AI-enabled functions in process automation.

The identifying feature of embedded electronic design is optimisation. Every component on a board has been selected because it is, systemically, the best fit, and every function in a design contributes to the overall solution. This solution-first approach to design requires a good understanding of the technologies that can be leveraged to implement each function.

Figure 1: According to the recent Avnet Insights report ‘Embracing Artificial Intelligence’, engineers expect to see the biggest uptake of AI in process automation

Conventional technologies used to implement functions, such as microcontrollers, have followed the trajectory of the semiconductor fabrication industry. There has been little to disrupt that trajectory, beyond the occasional hurdle associated with cost reduction. Fundamentally, the structure of integrated circuits has remained constant, benefiting from ever-smaller process geometries. Edge AI changes that.

AI at the edge has forced semiconductor manufacturers to rethink the way they design ICs. Even on mainstream process nodes, conventional architectures can deliver useful edge AI and machine learning (ML), but not always without impacting system-level performance. To maintain design optimisation, the complex vector processing needed to execute edge AI in resource-limited hardware requires new architectures.

Investigating new approaches

Edge AI is changing the way engineers think. New architectural approaches are leading to entirely new functionality enabled by AI and ML. Adding new functionality will complement existing features, extending capabilities.

This points to two ways in which AI is impacting the embedded electronics industry: it is changing the way existing functionality can be delivered, and it is enabling new functionality that could not be offered before. OEMs must first investigate what their customer base is ready to accept and then determine how best to deliver it.

Distribution partners that provide technical support can assist OEMs in exploring the technology available, developing a proof of concept and moving through the design cycle. This ultimately culminates in long-term support for volume production.

Redefining the design environment

Working with AI and ML starts with understanding the training and inferencing stages of development. Training is highly dependent on the data, while inferencing is more reliant on the hardware used to run the model. Moving from a trained model to a deployable inference engine is a crucial step, which cements design choices.
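To make those stages concrete, the Python sketch below runs them on a deliberately tiny problem. It is an illustrative toy under stated assumptions, not any vendor's toolchain: the model is a one-input linear fit, training uses plain gradient descent, and the conversion step uses symmetric 8-bit quantisation, which is one common (but not the only) way edge tools shrink a model for integer-only hardware. The function names and the synthetic data are hypothetical.

```python
# Toy walk-through of training -> conversion -> inference.
# Assumptions (not from the article): a one-input linear model, gradient
# descent training, and symmetric int8 quantisation for deployment.


def train(xs, ys, lr=0.05, epochs=1000):
    """Training stage: fit y = w*x + b to the data by gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def quantize(w, b):
    """Conversion stage: map float parameters to integers for the target.

    The weight becomes an int8 value with a per-tensor scale; the bias is kept
    as a wider integer on the same scale because the inputs are assumed to be
    raw integer sensor counts (input scale of 1).
    """
    scale = abs(w) / 127 if w != 0 else 1.0
    q_w = max(-127, min(127, round(w / scale)))
    q_b = round(b / scale)
    return q_w, q_b, scale


def infer_int(q_w, q_b, scale, x):
    """Inference stage: integer multiply-accumulate, then one rescale."""
    acc = q_w * x + q_b  # integer-only arithmetic on the device
    return acc * scale


xs = [0, 1, 2, 3, 4]
ys = [2 * x + 1 for x in xs]  # synthetic training data: y = 2x + 1
w, b = train(xs, ys)
q_w, q_b, scale = quantize(w, b)
print(f"float: {w * 3 + b:.3f}  int8: {infer_int(q_w, q_b, scale, 3):.3f}")
```

Training only depends on the data, while the deployed inference step needs nothing more than two integers and a scale factor. Once that numeric format is chosen, it is effectively baked into the design, which is why this conversion step cements earlier choices.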

Many semiconductor manufacturers now offer software solutions, either home-grown or through a partner, which can help train a model on your own data and then port that model to the hardware platform. These tools can often also accept pre-existing models from third-party sources, trained using other software.

Many device manufacturers are building repositories of pretrained models that can be retrained on a customer’s data. There are many sources of pretrained models to choose from (for example, Hugging Face now has more than two million models), but not all are suitable for deployment at the edge.

Models are typically built using a framework, which is often open source (leading examples include PyTorch and TensorFlow Lite). Exchange formats such as the Open Neural Network Exchange (ONNX) make interoperability between frameworks possible. This approach promotes design freedom, but at some stage in the process the engineering team must choose a specific hardware platform for deployment.

This is analogous to designing with programmable logic. At a high level of abstraction, such as a design described in a hardware description language (HDL), there are no dependencies on the target device. As the design moves through synthesis it develops dependencies, and by the place-and-route stage it is completely dependent on the target.

Distributors have close relationships with all the leading semiconductor vendors and have expertise in suppliers’ AI and ML technologies. Field application engineers can help engineering teams assess those technologies and make design choices at the right stage in the development cycle.

Multi-modal AI at the edge

Multi-modal AI, which uses AI to process multiple types of data at the same time for the same purpose, is changing the way embedded systems are conceived. It will have the biggest impact on embedded system topologies.

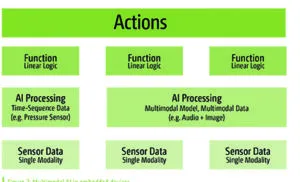

Figure 2: Multimodal AI in embedded devices

Historically, embedded electronics design was typically about closed-loop control systems. This is particularly true for the industrial vertical, where automation relies on precise, repeatable actions that are monitored and controlled using dedicated sensors. Each sensor would monitor an action and each action would have its own control circuit. These systems could be described as multi-modal because they combine functions controlled by data provided by different types of signal transducers.

Systemically, each control circuit delivers part of the overall function. Every possible action in that function would be pre-determined in sequential code – no surprises, no variation, but also, no flexibility. The number of actions is limited not by what the data can provide but by how the system can react. Any data that does not fit an action is ignored.

Using AI, the number of acceptable actions increases, limited only by the model’s size and training. Crucially, that means similar but different conditions no longer need to be classed as the same. In effect, the ‘rounding errors’ introduced by forcing distinct conditions into the same pre-determined action are removed.
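The contrast between the two approaches described above can be sketched in a few lines of Python. This is a hypothetical toy, not a production technique: a temperature reading selects a fan action, the conventional controller uses fixed ranges written in advance, and a nearest-centroid classifier stands in for a trained model. Real edge AI models work on far richer inputs; the point is only that the set of recognised conditions comes from labelled training data rather than from hand-written ranges.

```python
# Conventional fixed ranges versus a toy learned classifier.
# Hypothetical scenario: a temperature reading (deg C) selects a fan action.

RULES = [((20.0, 30.0), "fan_low"), ((30.0, 40.0), "fan_high")]


def rule_based(reading):
    """Return the pre-determined action, or None if no rule matches."""
    for (lo, hi), action in RULES:
        if lo <= reading < hi:
            return action
    return None  # data that does not fit an action is ignored


def fit_centroids(samples):
    """Toy 'training': average labelled readings into one centroid per class."""
    sums, counts = {}, {}
    for value, label in samples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def classify(centroids, reading):
    """Toy 'inference': choose the class with the nearest centroid."""
    return min(centroids, key=lambda label: abs(centroids[label] - reading))


# Made-up labelled data defining three conditions instead of two ranges
samples = [(22, "fan_low"), (26, "fan_low"), (32, "fan_medium"),
           (35, "fan_medium"), (39, "fan_high"), (44, "fan_high")]
centroids = fit_centroids(samples)
for reading in (31, 39, 45):
    print(reading, rule_based(reading), classify(centroids, reading))
```

With the fixed ranges, 31°C and 39°C trigger the same action and 45°C is ignored; the classifier separates the first two and assigns the third to the nearest learned condition.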

Design optimisation comes from choosing the right size model for accuracy, restricted by the resources of the hardware platform. The optimisation of that platform will depend on the performance needed from the model, weighed against the commercial limitations (for example, cost, size, power) of the design. Having access to engineers fluent in these co-dependent design choices can help OEMs select the most appropriate technologies.
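One way to picture this trade-off is a simple screening step that rejects any model variant whose weights will not fit the target’s memory, then keeps the most accurate of what remains. The sketch below assumes weight storage dominates (activation buffers and runtime overhead are ignored), and every candidate name, parameter count and accuracy figure is a hypothetical placeholder; in practice these numbers come from profiling on the actual hardware.

```python
# Screening model variants against a memory budget.
# All candidates and figures below are hypothetical placeholders.

BYTES_PER_PARAM = {"float32": 4, "int8": 1}


def weight_bytes(params, dtype):
    """Storage for the weights only (activations and runtime overhead ignored)."""
    return params * BYTES_PER_PARAM[dtype]


def select_model(candidates, flash_budget_bytes):
    """Return the most accurate candidate whose weights fit, or None."""
    fitting = [c for c in candidates
               if weight_bytes(c["params"], c["dtype"]) <= flash_budget_bytes]
    return max(fitting, key=lambda c: c["accuracy"], default=None)


candidates = [
    {"name": "small_int8", "params": 250_000, "dtype": "int8", "accuracy": 0.86},
    {"name": "medium_int8", "params": 900_000, "dtype": "int8", "accuracy": 0.91},
    {"name": "medium_fp32", "params": 900_000, "dtype": "float32", "accuracy": 0.92},
    {"name": "large_int8", "params": 3_000_000, "dtype": "int8", "accuracy": 0.94},
]

# Assumed budget: 1 MB of flash left for model weights
choice = select_model(candidates, flash_budget_bytes=1_000_000)
print(choice["name"] if choice else "no candidate fits")
```

Here the quantised medium model is selected: its float32 counterpart is slightly more accurate in this made-up table, but needs four times the storage and does not fit the budget.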

That same approach can be applied to data across all modalities, such as audio, environmental or image sensor data. This introduces powerful new capabilities, such as detecting conditions that only become apparent when data from different sources is aggregated.
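A common embedded pattern for this is late fusion, where each modality has its own model and only their output scores are combined. The Python sketch below is one hypothetical version: the modality names, weights and threshold are assumptions chosen for illustration, and a weighted average is only one of several ways to fuse scores.

```python
# Late fusion of per-modality fault scores (hypothetical modalities and weights).

WEIGHTS = {"audio": 0.3, "environmental": 0.2, "image": 0.5}
THRESHOLD = 0.6


def fuse(scores, weights=WEIGHTS):
    """Weighted average of the scores from whichever modalities reported."""
    present = [m for m in scores if m in weights]
    if not present:
        return 0.0
    total_weight = sum(weights[m] for m in present)
    return sum(weights[m] * scores[m] for m in present) / total_weight


def fault_detected(scores):
    """Flag the condition when the fused score reaches the threshold."""
    return fuse(scores) >= THRESHOLD


# The image model alone (0.4) would not flag a fault, but combined with strong
# audio and environmental evidence the fused score (about 0.63) does.
readings = {"audio": 0.9, "environmental": 0.8, "image": 0.4}
print(fault_detected({"image": 0.4}), fault_detected(readings))
```

Because `fuse` renormalises over the modalities that actually reported, the same code keeps working if one sensor drops out.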

There are several ways multimodal AI can be implemented. The enterprise approach is to train a single model on multiple data types (this is already evident in cloud services hosted in datacentres).

The embedded approach may be to run multiple models on multiple processors, or smaller models on a single processor with multiple cores. Cascading AI models involves using previous inferences as triggers or inputs for later stages. Another approach would be to combine multiple hardware-based solutions in one design. Figure 2 shows how embedded devices will harness multi-modal AI to bring new functionality to every vertical sector. Combining several types of AI and ML in a single device will become the standard approach.
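The cascading approach can be sketched as follows. Both stages are hypothetical stand-in functions rather than real models: a cheap first stage scores audio levels, and a costlier second stage only runs when that score crosses a trigger, receiving the earlier inference as an extra input. On real hardware the first stage might run on a low-power core or inside a smart sensor, with the second stage on a processor or accelerator that is woken only when needed.

```python
# Two-stage cascade: a low-cost model gates a costlier one.
# Both "models" are hypothetical stand-ins with placeholder logic.


def stage1_audio_score(audio_level_db):
    """Cheap first stage: map a sound level to an anomaly score in [0, 1]."""
    return min(1.0, max(0.0, (audio_level_db - 50) / 30))


def stage2_vision_check(frame_brightness, prior_score):
    """Costlier second stage: uses the frame plus the stage-1 inference.

    Placeholder rule: a dark frame backed by strong audio evidence is
    reported as a defect.
    """
    return frame_brightness < 100 and prior_score > 0.8


def cascade(samples, trigger=0.5):
    """Run stage 2 only for samples whose stage-1 score reaches the trigger."""
    results = []
    for idx, (audio_level_db, brightness) in enumerate(samples):
        score = stage1_audio_score(audio_level_db)
        if score < trigger:
            continue  # stage 2 stays idle for this sample
        results.append((idx, stage2_vision_check(brightness, score)))
    return results


# Each sample: (sound level in dB, mean frame brightness 0-255), both made up
samples = [(40, 120), (55, 90), (70, 80), (85, 95), (45, 60)]
print(cascade(samples))
```

In this example the second stage runs on only two of the five samples, which is where the power saving of a cascade comes from.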

The sensor example in Figure 2 is particularly apt for embedded systems, and it shows how a distributor working with all the leading semiconductor vendors can be a valuable partner. Device manufacturers are implementing AI and ML in various ways, ranging from dedicated cores and AI hardware accelerators to ML cores integrated directly into sensors. Working with a team of engineers that has visibility into all the options and solutions currently available can help OEMs make better design choices at the right stage.

Beyond Industry 4.0

Industrial automation is a key application for edge AI. AI will be at the centre of the fifth industrial revolution, where the focus shifts from machinery towards people, productivity and sustainability. Embedded electronics will remain the foundation of industrial automation and edge AI will be fundamental to Industry 5.0.

The shift will create some turbulence in the industry as business owners navigate the various drivers for change, engineers discover the challenges of transitioning to edge AI and teams explore the unfamiliar.

Distributors can support OEMs with dedicated engineers, experienced with the newest AI and ML solutions from across the industry, allowing OEMs to react quickly and benefit from an ‘early mover’ advantage.

Electronics Weekly