The many cores are shaped and spaced inside a single fibre to enhance specific interactions.

“The fibre enables [us] to perform several types of functions which are essential to neural-networks computation,” company CEO and co-founder Eyal Cohen told Electronics Weekly. “Out of many possibilities, these functions can be set and optimised to specific parameters using external amplification light that is injected into the fibre along with signal light [at a different wavelength].”

In the concept, external opto-electronics pulses data into the fibre at high speed, and reads the result. Pulses are delivered at 500MHz per channel, limited by the need to maintain synchronisation.

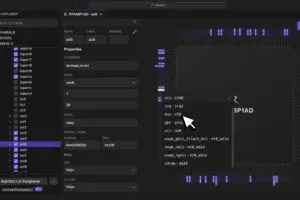

The neural network is trained using gradient descent, and the “result is a multi-layer perceptron that can be used as a classifier, an auto-encoder or a fully connected layer,” said Cohen. “An FPGA implements the training algorithm, sets the parameters, and changes them according to the gradient, so the advantage [over a pure electronics solution] is only at the speed of data injection. However, parameters are static during inference, and the only limiting factor is the data injection speed – the difference in performance is large.” He added that there might be a point, perhaps with 400 input channels, where training might also be quicker.
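The training arrangement Cohen describes, in which a controller sets parameters, measures the output and adjusts the parameters along the gradient, can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: `fibre_forward` is a hypothetical software stand-in for the fibre (a weighted sum followed by a saturating function, not a physical model), and the gradient is estimated by finite differences, since the article does not say how the FPGA obtains it.

```python
import math
import random


def fibre_forward(inputs, params):
    """Hypothetical stand-in for the fibre: weighted sum, then saturation.

    This is NOT a physical model; in the real system this step would be
    the optical hardware, treated as a black box by the controller.
    """
    total = sum(w * x for w, x in zip(params, inputs))
    return math.tanh(total)


def loss(params, dataset):
    """Mean squared error over (inputs, target) pairs."""
    errors = [(fibre_forward(x, params) - y) ** 2 for x, y in dataset]
    return sum(errors) / len(errors)


def estimate_gradient(params, dataset, eps=1e-4):
    """Central finite differences: nudge each parameter, re-measure the loss.

    Assumption: the article does not describe the gradient method.
    """
    grad = []
    for i in range(len(params)):
        up = list(params)
        up[i] += eps
        down = list(params)
        down[i] -= eps
        grad.append((loss(up, dataset) - loss(down, dataset)) / (2 * eps))
    return grad


def train(dataset, n_params, steps=200, lr=0.5, seed=0):
    """Gradient descent loop, playing the role the article gives the FPGA."""
    rng = random.Random(seed)
    params = [rng.uniform(-0.1, 0.1) for _ in range(n_params)]
    for _ in range(steps):
        grad = estimate_gradient(params, dataset)
        params = [p - lr * g for p, g in zip(params, grad)]
    return params
```

Once `train` returns, the parameters stay fixed and each inference is a single call to `fibre_forward`. That mirrors the point in the quote: with static parameters, throughput depends only on how fast data can be injected.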

The company, founded in 2018, recently raised $6m in series-A funding, led by Chartered Group.

Electronics Weekly
