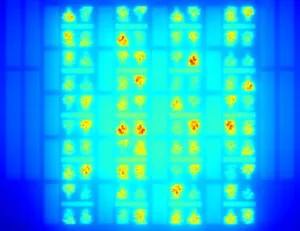

The data comes from a thermal STCO (system-technology co-optimisation) study of integrating high-bandwidth memory (HBM) on a GPU by stacking, which Imec describes as a promising compute architecture for next-gen AI applications.

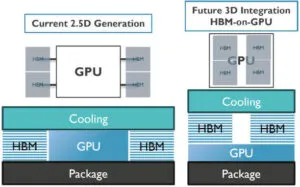

It has compared this ‘3D’ stacking with the current ‘2.5D’ generation, in which a GPU sits alongside stacks of memory dies (see diagram).

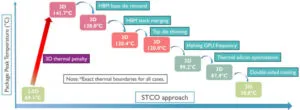

“By combining technology and system-level mitigation strategies, peak GPU temperatures could be reduced from 140.7°C to 70.8°C under realistic AI training workloads – on par with current 2.5D integration options,” it said. “The result demonstrates the strength of co-optimising the knobs at all the different abstraction layers.”

Stacked integration will allow up to four GPUs per package.

“However, the aggressive 3D integration approach is prone to thermal issues because of higher local power density and vertical thermal resistance,” said Imec.

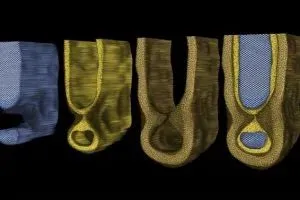

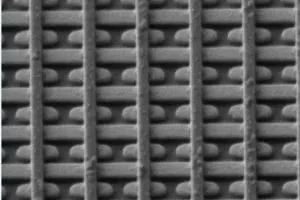

Four memory stacks were modelled, each consisting of twelve hybrid-bonded DRAM dies, micro-bumped directly on top of the GPU die (see diagram).

With top-side cooling and no thermal mitigation, the hot-spot temperature was 141.7°C, compared with 69.1°C for a 2.5D integration model of the same die with the same cooling.
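To put these figures side by side, the short sketch below works out how far the 3D stack sits above the 2.5D baseline before and after mitigation. It uses only temperatures quoted in this article (141.7°C and 69.1°C from the model, 70.8°C from Imec's statement); the variable and function names are illustrative and not taken from Imec's work.

```python
# Peak GPU temperatures quoted in the article, in degrees Celsius.
T_3D_UNMITIGATED = 141.7  # 3D stack, top-side cooling, no thermal mitigation
T_3D_MITIGATED = 70.8     # 3D stack after the combined mitigation steps (Imec's quoted figure)
T_25D_BASELINE = 69.1     # 2.5D model, same die, same cooling


def gap_to_baseline(temperature_c: float, baseline_c: float = T_25D_BASELINE) -> float:
    """Return how many degrees a configuration runs above the 2.5D baseline."""
    # Round to one decimal place, matching the precision of the quoted figures.
    return round(temperature_c - baseline_c, 1)


gap_before = gap_to_baseline(T_3D_UNMITIGATED)
gap_after = gap_to_baseline(T_3D_MITIGATED)

print(f"Gap to 2.5D before mitigation: {gap_before} °C")
print(f"Gap to 2.5D after mitigation:  {gap_after} °C")
```

On these numbers, the unmitigated 3D stack runs roughly 72.6°C hotter than the 2.5D model, and the mitigated stack about 1.7°C hotter.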

In search of improvement, physical thermal modifications included removing the base die from the memory, merging the memory stacks and thinning the top die (diagram right).

“Combining two square HBMs into a single rectangular HBM with 2:1 aspect ratio avoids having mould compound between them, so is thermally beneficial to the GPU placed underneath,” Imec programme director James Myers told Electronics Weekly. Also, “we found that a narrow piece of thermal silicon at edges of the GPU was beneficial to avoid thermal hotspots there. To make room, we moved the HBMs inwards a little”.

Double-sided cooling was added and, beyond this, the GPU operating frequency had to be halved to approach 2.5D temperatures.

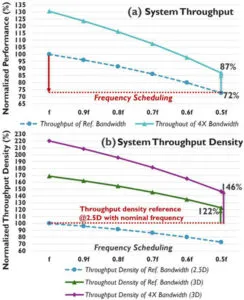

a) performance-throughput degrades at lower frequency, partially recovered by 4x bandwidth increase

b) throughput per package area improves thanks to a smaller footprint

(excludes benefits from additional GPUs per package)

“Halving the GPU core frequency brought the peak temperature from 120°C to below 100°C,” said Myers. “Although this step comes with a 28% slow-down of AI training steps, the overall package outperforms the 2.5D baseline thanks to a higher throughput density offered by the 3D configuration. We are currently using this approach to study other GPU-memory configurations – placing GPUs on top of memories, for example.”
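The trade-off Myers describes, slower training steps but higher throughput per unit of package area, can be illustrated with a minimal sketch. It rests on two assumptions that are not in the article: that the “28% slow-down” means each training step takes 1.28 times as long, and that the 3D package footprint can be expressed as a fraction of the 2.5D footprint (`area_ratio`, a hypothetical parameter, since no footprint figures are given here).

```python
def relative_throughput(step_slowdown: float) -> float:
    """Throughput relative to the baseline, assuming each step takes (1 + slowdown) times as long."""
    return 1.0 / (1.0 + step_slowdown)


def relative_throughput_density(step_slowdown: float, area_ratio: float) -> float:
    """Throughput per package area relative to the baseline.

    area_ratio is the 3D footprint divided by the 2.5D footprint (hypothetical input).
    """
    if area_ratio <= 0:
        raise ValueError("area_ratio must be positive")
    return relative_throughput(step_slowdown) / area_ratio


if __name__ == "__main__":
    slowdown = 0.28  # the 28% figure quoted by Myers, read as a step-time increase

    # The 3D package wins on density only if its footprint ratio is below this value.
    break_even = relative_throughput(slowdown)
    print(f"Break-even area ratio: {break_even:.3f}")

    # Hypothetical footprint ratios, chosen purely for illustration.
    for area_ratio in (1.0, 0.75, 0.5):
        density = relative_throughput_density(slowdown, area_ratio)
        print(f"area_ratio={area_ratio:.2f} -> relative throughput density {density:.2f}")
```

Under that reading, the 3D package delivers about 78% of the baseline throughput, so it comes out ahead on throughput per area whenever its footprint is less than about 78% of the 2.5D package. This excludes any gain from fitting additional GPUs in the package.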

Imec operates by collaborating with industry. It is currently seeking partners, including fabless and system companies, to join ‘XTCO’, a programme examining cross-technology co-optimisation towards thermally-robust advanced computing systems.

The memory-on-GPU investigation was “the first time that we demonstrate the capabilities of Imec’s XTCO program”, said the lab’s v-p of logic technologies Julien Ryckaert.

Electronics Weekly
